Welcome back to another edition of Articles by Victoria, the place where I randomly write about things I'm curious about.

Over the past year, I have found myself increasingly uncomfortable with how casually we (and the media) throw around the phrase "AI bubble." It has become the default framing for almost any conversation about generative AI.

You'll likely come across this phrase often. Whenever funding rounds get larger, another company announces an AI feature, or layoffs happen in the same quarter as an automation announcement, someone inevitably says, "This feels like a bubble."

And to be fair, the instinct is understandable. We have lived through enough hype cycles to recognize the signs: capital floods in, expectations become unrealistic, headlines get louder than fundamentals, and eventually the market corrects. The dot-com era burned itself into institutional memory, so when we see massive AI valuations and nonstop announcements, it feels familiar.

After reading The State of AI: How Organizations Are Rewiring to Capture Value by McKinsey & Company, I had some thoughts.

A quick note

This report is based on a global online survey conducted in July 2024, with 1,491 respondents across 101 countries. The participants span industries, regions, company sizes, and seniority levels, including C-suite executives, senior managers, and midlevel leaders. About 42% of respondents work in organizations with more than $500 million in annual revenue, so a significant portion of the data reflects large, established enterprises rather than early-stage startups.

The results are also weighted by each country's contribution to global GDP, which means the findings aim to represent the broader global economic landscape. In other words, when we talk about 78% of organizations using AI, we're largely talking about structured companies with real governance, compliance requirements, and complex operational systems.
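To make the weighting idea concrete, here is a minimal sketch of how a GDP-weighted share could be computed. This is not McKinsey's actual methodology, which the report does not spell out in detail. The function name `gdp_weighted_share`, the countries "A" and "B", and every number below are made up purely for illustration.

```python
# Hypothetical illustration of GDP-weighted survey results.
# Country labels, GDP shares, and answers are invented, not from the report.

def gdp_weighted_share(responses, gdp_share):
    """Return the GDP-weighted share of 'yes' answers.

    responses: country -> list of booleans (True = organization uses AI)
    gdp_share: country -> that country's share of global GDP
    """
    total_weight = sum(gdp_share[country] for country in responses)
    weighted_sum = 0.0
    for country, answers in responses.items():
        country_rate = sum(answers) / len(answers)  # share of 'yes' in this country
        weighted_sum += gdp_share[country] * country_rate
    # Normalize by the total weight of the countries actually surveyed.
    return weighted_sum / total_weight


responses = {
    "A": [True, True, True, False],  # 3 of 4 respondents say yes
    "B": [True, False],              # 1 of 2 respondents say yes
}
gdp_share = {"A": 0.6, "B": 0.2}     # assumed shares of global GDP

# Plain head count: every respondent counts equally.
unweighted = sum(sum(a) for a in responses.values()) / sum(
    len(a) for a in responses.values()
)
weighted = gdp_weighted_share(responses, gdp_share)

print(f"unweighted: {unweighted:.0%}")  # prints "unweighted: 67%"
print(f"weighted:   {weighted:.0%}")    # prints "weighted:   69%"
```

In this toy example, country A's answers pull the result up because A carries more economic weight, which is the general effect GDP weighting is meant to have.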

What is a Bubble?

An economic bubble tends to have the following traits:

* Excitement is high about something new, such as a new technology, but the underlying value is weak

* Investors pour money into it mostly because they fear missing out

* Hype grows faster than actual results or benefits

* Classic signs: soaring valuations, flashy announcements, lots of attention but little long-term impact

When a bubble bursts, we typically see the following:

* Prices or valuations drop sharply after rising too fast

* Hype and expectations collapse because reality doesn't match the excitement

* People quickly pull back their investment, interest, or adoption

* Projects, companies, or initiatives that were driven by FOMO fail or shrink rapidly

* Overall, the market or sector sees a sudden loss of confidence and momentum

So the question "Are we in an AI bubble?" is not an easy one to answer. To move beyond speculation, let's look at some hard data from McKinsey. Their survey gives us insight into how organizations are actually using AI, where value is being created, and where challenges remain. This evidence helps us understand whether the excitement is just hype or something more substantial.

Adoption is no longer experimental

One of the most striking data points in the report is that 78% of organizations now use AI in at least one business function, while 71% report regular use of generative AI in at least one function. This is not fringe experimentation happening in innovation labs or hackathons. This is mainstream adoption across IT, marketing and sales, service operations, product development, and software engineering.

When adoption crosses this kind of threshold, the conversation shifts. We are no longer debating whether AI will enter the enterprise. It already has. The real question becomes whether organizations are using it superficially or structurally.

At the same time, the report is clear that bottom-line impact remains limited at the enterprise level. More than 80% of respondents say they are not yet seeing tangible enterprise-wide EBIT impact from generative AI. That gap between widespread adoption and limited measurable enterprise impact is precisely where bubble narratives tend to grow. From the outside, it can look like enthusiasm without returns.

However, what the data reveals underneath that surface is much more nuanced.

The hype and the promises sold

Much of the early excitement around AI has focused on large language models, or LLMs. They make for flashy demos, attention-grabbing headlines, and bold promises about transforming work overnight. It's easy to see why many organizations rushed to integrate LLMs into their tools and workflows.

Companies that rely solely on LLMs without thinking about how work actually flows often see limited results. McKinsey's data confirms this. The biggest driver of measurable impact from generative AI isn't the sophistication of the model or the number of pilots a company runs. It's workflow redesign.

Long-term effect: workflow redesign drives the success of AI deployment

In the report, McKinsey analyzed 25 different organizational attributes and their relationship to bottom-line impact from generative AI. The single strongest driver of EBIT impact was not model sophistication, not the number of pilots, not how aggressively companies marketed their AI initiatives. It was workflow redesign.

21% of organizations using generative AI report that they have fundamentally redesigned at least some workflows as part of deployment. That number may not sound dramatic at first glance, but if you have ever worked inside a large organization, you know how difficult fundamental workflow redesign actually is. It requires cross-functional alignment, operational mapping, retraining, governance adjustments, and change management discipline.

That detail fundamentally changes how I think about the so-called bubble. Bubbles are characterized by surface-level adoption driven by fear of missing out. Structural redesign is driven by long-term strategy. When companies start rethinking how work flows through their systems, they are making decisions that will shape cost structures, talent requirements, and performance metrics for years to come.

AI adoption is a leadership problem, not just technical

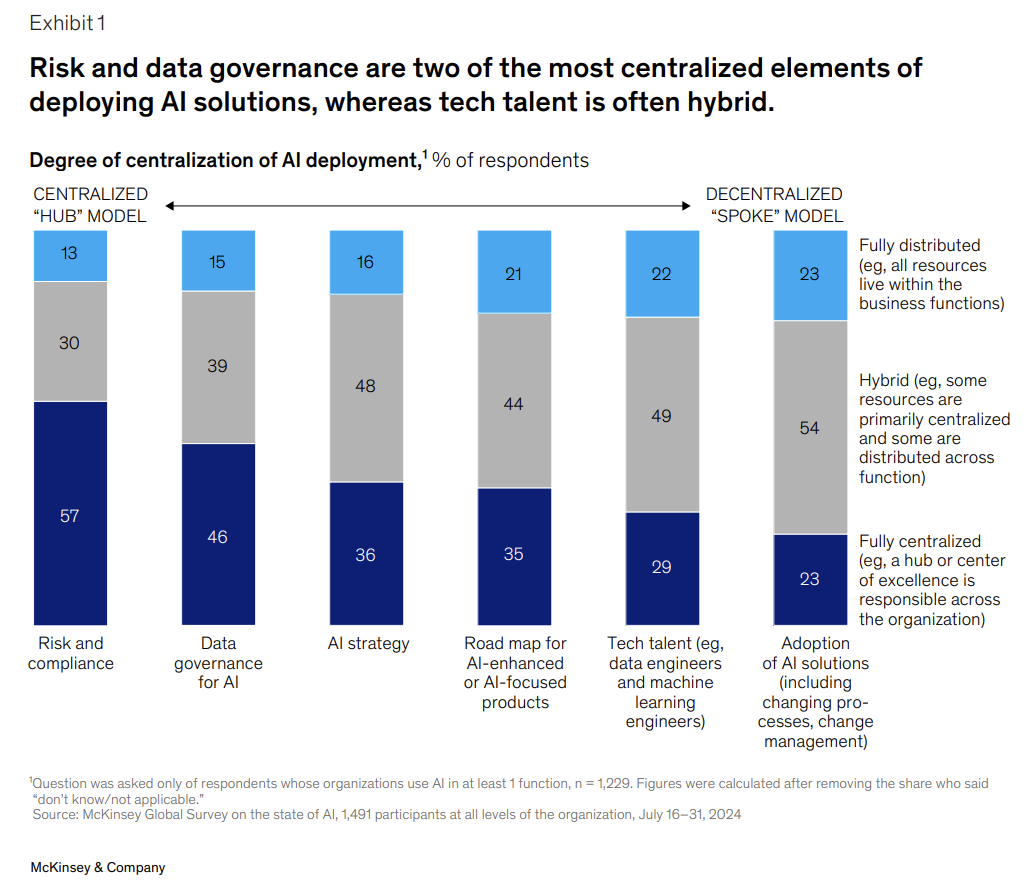

Another element that stood out to me is governance. 28% of organizations report that their CEO oversees AI governance, and in many large organizations, boards are directly involved. The report emphasizes that CEO oversight is strongly correlated with higher self-reported bottom-line impact from generative AI.

This is important because it signals where AI sits in the power structure of the organization. When technology is delegated entirely to engineering teams, it often remains tactical.

As a Solutions Engineer Lead, I sit at an interesting intersection: I'm in conversations with stakeholders who are trying to figure out how to operationalize AI inside messy, real-world systems.

What I've noticed is that the companies that see meaningful impact are not the ones chasing the flashiest demo. They are the ones with their C-suite leaders willing to sit through uncomfortable conversations about ownership, process redesign, data quality, and measurement. They ask who is accountable for this workflow. They ask how we will measure whether this actually improves outcomes. They are prepared to change how teams operate, not just layer AI on top.

That gap in execution feels more significant to me than the gap in technology.

Risk Mitigation is accelerating

One of the defining characteristics of speculative hype cycles is underestimation of risk. Companies move quickly, often overlooking governance until problems accumulate. In contrast, this report shows organizations actively increasing mitigation efforts around inaccuracy, cybersecurity, intellectual property infringement, privacy, and regulatory compliance.

47% of organizations report experiencing at least one negative consequence from generative AI use. That statistic is significant because it demonstrates that companies are not operating under the illusion that AI is flawless. They are encountering real operational friction. Yet instead of retreating, they are building centralized governance structures, hiring AI compliance specialists, and implementing review mechanisms for outputs.

27% of respondents say their organizations review all generative AI outputs before usage, while a similar proportion review very little. That variation reflects experimentation with oversight models, but the existence of formal review structures indicates seriousness. Organizations are not blindly deploying systems without guardrails.

The Real Data on Workforce

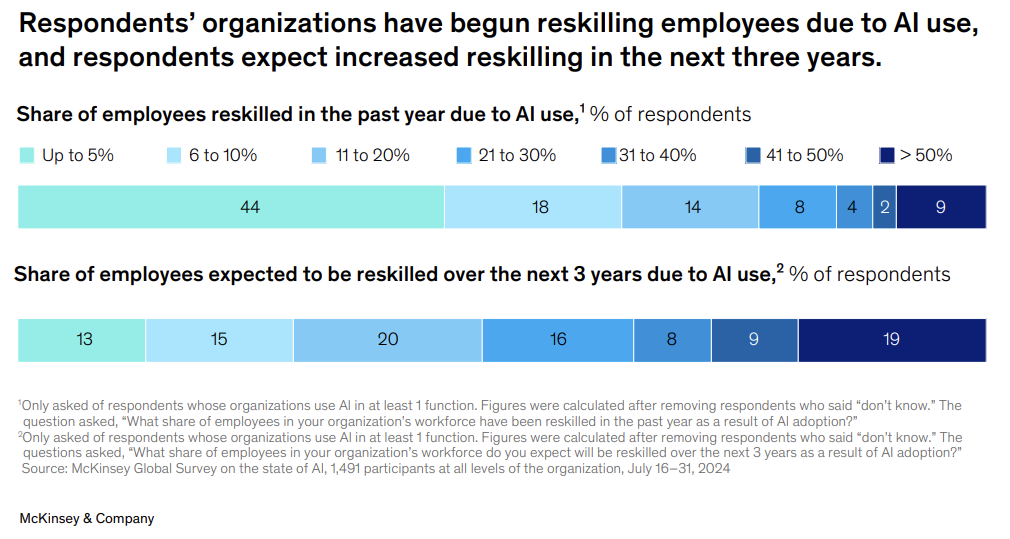

Much of the public conversation around AI centers on job displacement. The survey paints a more complicated picture. While certain functions such as service operations and supply chain management are more likely to experience headcount reductions, many respondents expect no overall workforce change in the next three years. In IT and product development, expectations actually tilt toward headcount increases.

At the same time, organizations are reskilling employees in meaningful numbers and expect significantly more reskilling over the next three years. Half of respondents whose organizations use AI say they will need more AI data scientists in the coming year. Companies are also hiring AI compliance and ethics specialists, roles that did not exist at scale a few years ago.

This suggests reconfiguration rather than simple contraction.

If this were a fragile bubble, we might expect erratic hiring followed by dramatic pullbacks. Instead, we see structured reskilling programs, forward-looking talent planning, and role diversification. The labor market is adjusting, but it is adjusting through managed transformation rather than abrupt collapse.

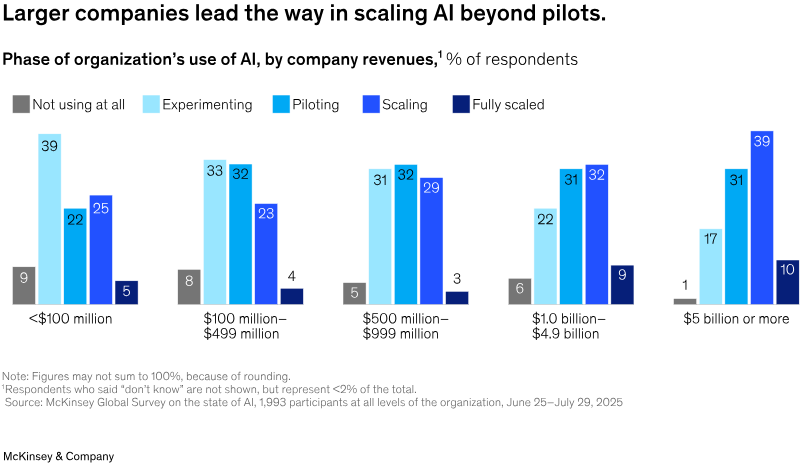

The gap between bigger and smaller companies

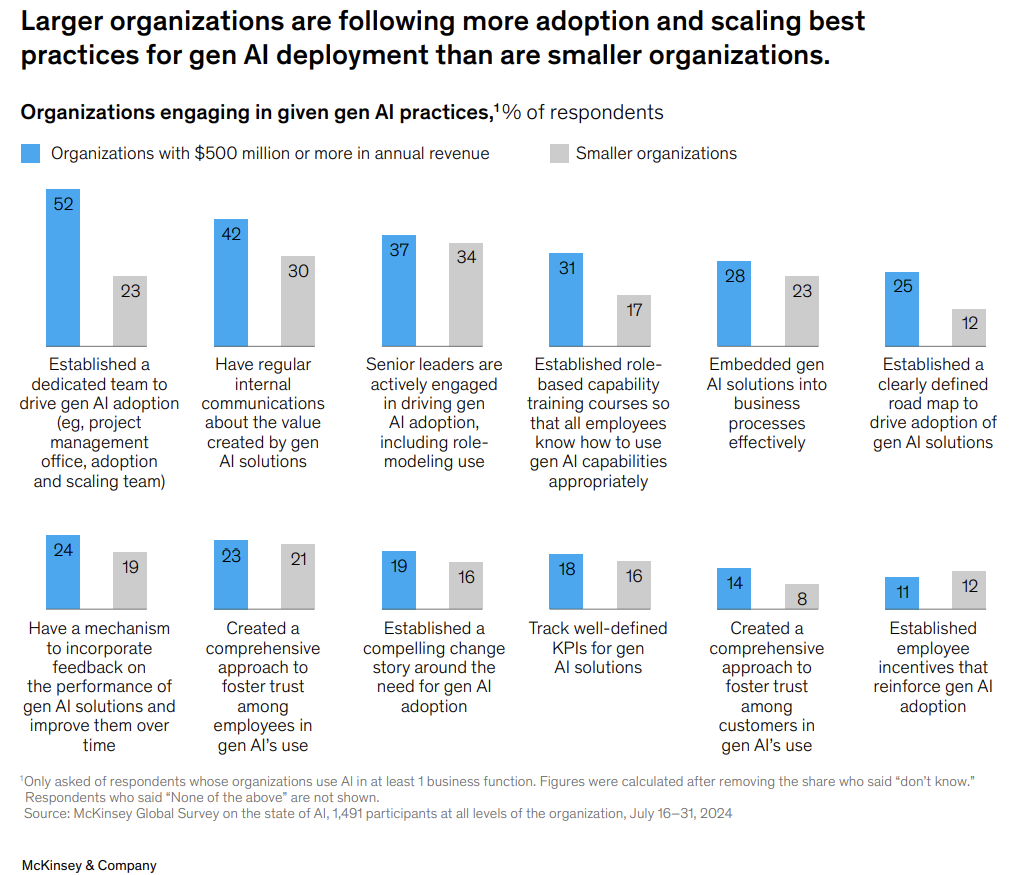

None of this means there is no risk. In fact, the most concerning data point in the report is that less than one third of organizations are following most of the 12 adoption and scaling best practices identified as critical for value creation. Fewer than one in five track well-defined KPIs for generative AI solutions.

This is where I see the real danger. The technology is moving quickly and adoption within companies is widespread, but management maturity is uneven. The data shows that smaller organizations are less likely than larger ones to establish road maps, define KPIs, embed solutions into business processes, and actively manage change. Those that fail to do so will struggle to convert experimentation into sustained returns.

That gap can create volatility. While some companies will overestimate their progress, others will underinvest in scaling. In the next few years, the competitive divergence between those that genuinely rewire and those that merely experiment will widen.

Personal Experience: Knowledge/Capability Gap

When I zoom out and look at what's happening, especially through the lens of my own work as a Solutions Engineer Lead, what I see isn't a bubble waiting to burst. What I see is a growing gap between organizations that are serious about change and those that are just experimenting on the surface.

In my day-to-day work, I've noticed something interesting. The companies that actually see impact from AI are not the ones asking for the flashiest demo or the most advanced model. They are the ones willing to sit through uncomfortable conversations about process redesign, data governance, ownership, and KPIs. They ask questions like, "Who is accountable for this workflow?" or "How will we measure whether this actually improves outcomes?"

On the other hand, I've also seen teams excited to "add AI" to their stack without thinking through change management or how it fits into existing systems. The tool gets integrated, maybe a few people try it, but nothing fundamentally changes. Six months later, leadership wonders why the ROI isn't obvious.

That's why I don't think the biggest risk right now is that AI disappears. The bigger risk is that some organizations never move beyond surface-level adoption. Larger enterprises, at least according to the survey, are more likely to centralize governance, build dedicated AI teams, and define clear road maps. In my experience, that structure makes a huge difference because it forces alignment from the top and creates space for proper implementation.

Smaller companies or less mature teams might still adopt AI, but without that structural depth, the impact stays limited. Over time, that difference in execution can compound. The companies that treat AI as a strategic shift will quietly pull ahead, while others remain stuck in pilot mode.

Conclusion

There is hype at the edges of the AI ecosystem. Valuations may fluctuate. Some startups will fail. Some corporate AI initiatives will quietly dissolve when ROI disappoints. That is normal in any major technological transition.

But when I step back from the headlines and the valuation chatter, I can't ignore the fact that something more substantial is happening underneath all of it. Companies are not just experimenting with shiny tools and hoping for magic. They are redesigning workflows, sometimes painfully, sometimes slowly, but deliberately. CEOs are stepping into governance conversations instead of leaving AI buried inside IT departments. Risk management frameworks are being built out, not because it's trendy, but because real consequences have already surfaced.

At the same time, organizations are investing in reskilling programs, adjusting talent strategies, and embedding AI across multiple business functions rather than confining it to isolated pilots. In several business units, leaders are already reporting revenue increases and cost reductions tied to generative AI, even if those gains have not yet translated into dramatic enterprise-wide EBIT shifts. That tension between localized value and broader financial impact is exactly what you would expect in the early stages of structural change.

And perhaps that is what makes this moment uncomfortable. We are in the in-between phase where expectations are high, execution is uneven, and results are still consolidating. It is tempting to label that uncertainty as a bubble because uncertainty is easier to dismiss than to analyze.

For me, the more interesting question is not whether the AI bubble will collapse, but whether organizations have the leadership discipline, governance maturity, and operational courage to complete the rewiring they have started.

Because if they do, this will not be remembered as a bubble. It will be remembered as a foundational shift in how enterprises are structured.

Thanks for reading! I'd love to hear your own thoughts and experiences on this topic. Feel free to connect, send me an email (my inbox is always open), or let me know in the comments! Cheers!

Let's Connect!

* Twitter

* LinkedIn

* GitHub

* ragTech

* WomenDevsSG